Protecting Kids Online: Why Body Analysis Tools Should Be Off-Limits

The internet is full of tools that promise to analyze photos and turn appearance into numbers. For adults, those tools are often framed as fitness or self-improvement.

Children are a different story. When the person in the photo is under 18, the risks around privacy, consent, and misinterpretation rise sharply. That is why body analysis tools should be off-limits for minors.

What Body Analysis Tools Actually Do

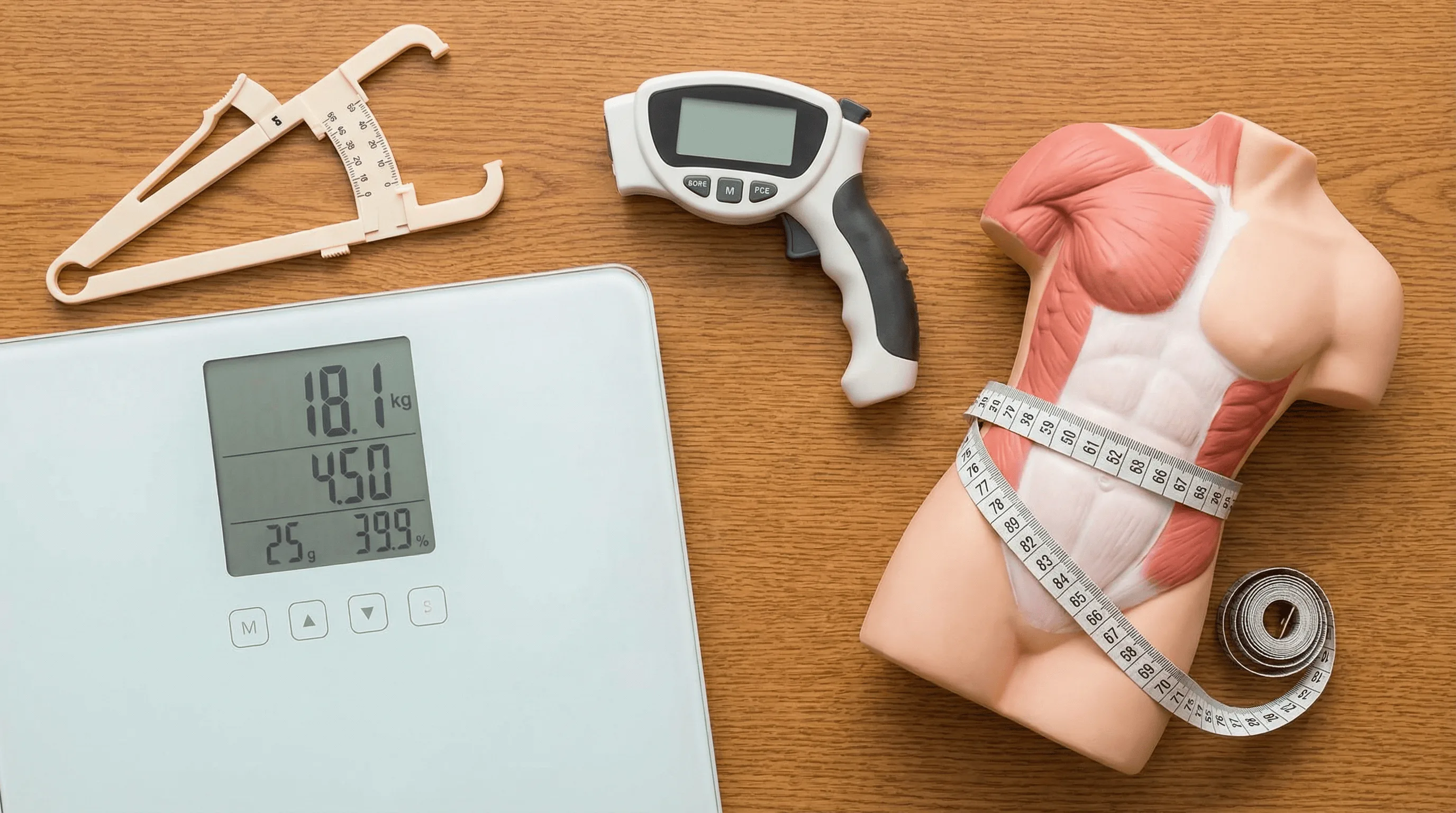

Body analysis tools cover a wide range of features. Some estimate body fat from a photo. Some assess shape, posture, or proportions. Others score facial symmetry or physical traits from visual cues.

Even for adults, these outputs are estimates, not medical measurements. They can be useful for trend tracking, but they are still approximations.

The mistake is assuming that if a tool seems reasonable for adults, it must also be appropriate for teenagers or younger children. That assumption breaks down quickly.

Children Are Still Developing

Children are not smaller adults. Their bodies are changing constantly through growth spurts, puberty, and normal developmental variation.

A number that looks precise on a screen can be misleading in this context. A normal stage of development can easily be misread as a problem.

Once a score exists, people tend to treat it as objective. That is risky when the underlying signal is unstable and the person is still growing.

Most Tools Are Built For Adults, Not Minors

Most consumer body analysis tools are designed around adult data and adult use cases. They are rarely validated as pediatric tools.

The output can still look authoritative because it is shown as a percentage or score, but surface precision does not guarantee that the model is suitable for children.

Adults may choose to use these tools while understanding their limits. Children usually do not make that choice themselves.

Privacy Risks Are Bigger When Kids Are Involved

Uploading any photo raises privacy questions: where the image is stored, how long it is kept, and whether it is reused for model training or shared with third parties.

Those risks are more serious when the image belongs to a child. Children cannot meaningfully consent to creating a long-term digital record about their body.

Parents building a safer digital environment can also use resources like Safe Search Kids for practical online safety guidance.

Body Image Harm Can Start Earlier Than People Think

Kids already grow up in environments full of comparison and appearance pressure. Adding body-scoring tools can intensify that pressure.

A result that adults see as casual can stick with a child. The child may internalize the message that their body should be monitored, measured, and judged.

Health support is one thing. Early obsessive body awareness is another. The line between them matters.

Consent Is Not Just A Checkbox

Informed consent requires understanding long-term implications. Children cannot weigh those implications the way adults can.

Parents make many decisions on behalf of children, but good judgment includes restraint when a tool may create more harm than benefit.

Some technologies are better left unused in childhood contexts, even if they are popular with adults.

What Adults Should Focus On Instead

If the goal is to support a child's health, better signals exist: sleep quality, activity level, energy, confidence, and overall wellbeing.

If there is a genuine health concern, that conversation belongs with qualified professionals, not image-based consumer scoring tools.

- Prioritize habits and routines over appearance-based scores.

- Encourage sports and movement for skill and enjoyment.

- Protect privacy by minimizing photo uploads to unknown tools.

- Keep body conversations supportive, not evaluative.

Bottom Line

Not everything that can be measured should be measured. Body analysis tools may have a role for informed adults, but they are not appropriate for children.

Protecting kids online is not only about blocking obvious threats. It is also about setting clear boundaries around tools that can affect privacy, development, and self-image.